Building a Serverless Event-Driven ETL Pipeline for Financial Analysis with AWS

While many investors prefer a passive approach, I like digging into the numbers myself; finance and the analysis of public companies have always fascinated me. But when I tried to track financial data for more than 14,000 companies, the task quickly overwhelmed me.

A few years ago, I built a basic system on my desktop that could gather the data I needed, but it was far from ideal: I had to start it manually, leave my computer running for days, and repeat the process whenever the data became outdated.

I also recently completed my AWS Solutions Architect certification, which gave me a fresh perspective on building scalable, cloud-based solutions. That is when I decided to create a data product that would automate and streamline the analysis, saving me time and keeping me informed about new opportunities.

To achieve this, I opted for an event-driven architecture that is completely serverless. This approach not only reduced costs but also allowed me to focus on building a scalable and reliable system. The majority of the pipeline falls within the free tier of AWS, with costs amounting to just a few cents per month.

System Overview

My first priority was to keep development and maintenance costs in check. I did not want a product that would cost a fortune to run and continually drag me back in for "quick fixes". To avoid this, I chose an event-driven, serverless architecture built on AWS managed services. The solution scales without servers running around the clock, which saves a lot on cloud costs.

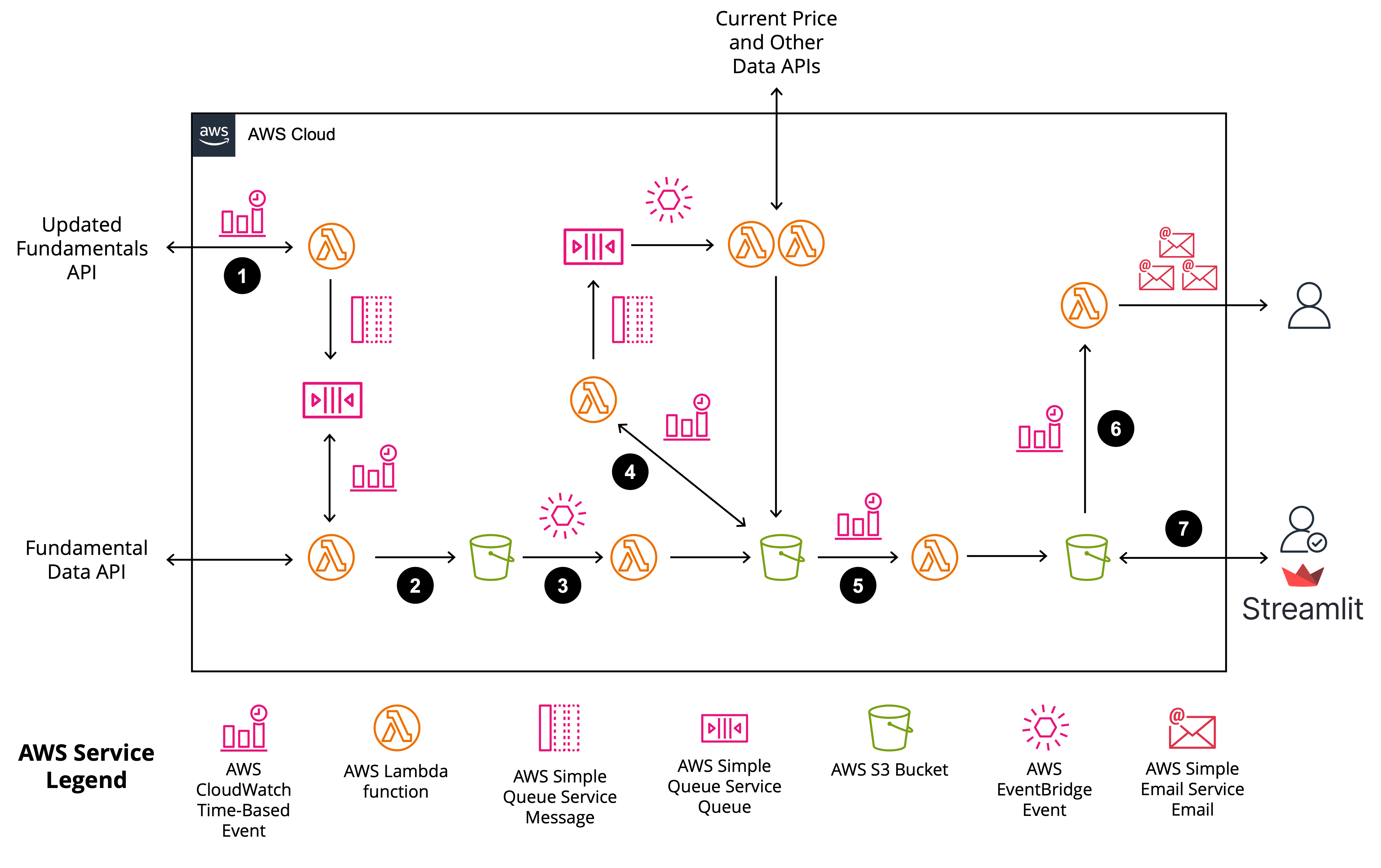

Here is a breakdown of how the system functions, with the list numbers aligning with the steps detailed in Figure 1:

- Weekly Monitoring for New Data: Every week, the system automatically checks for newly released financial statements. If there is new data, it sends a message to an Amazon SQS queue with the company's ISIN (unique identifier).

- Fetching New Statements: The system regularly checks the queue and downloads any new financial statements to a "raw data" storage area.

- Automated ETL Process: Each time new data is added, an S3 event triggers an AWS Lambda function that runs an ETL (extract, transform, load) process. This function pulls out key KPIs needed for financial analysis and saves this refined data to a staging area.

- Data Enrichment: Once a week, a set of Lambda functions are triggered to download current price data and other details to enrich the financial information.

- Consolidation for Analysis: The system then consolidates all necessary data in the staging area and saves it to the "processed data" area, ensuring I am always working with the latest information.

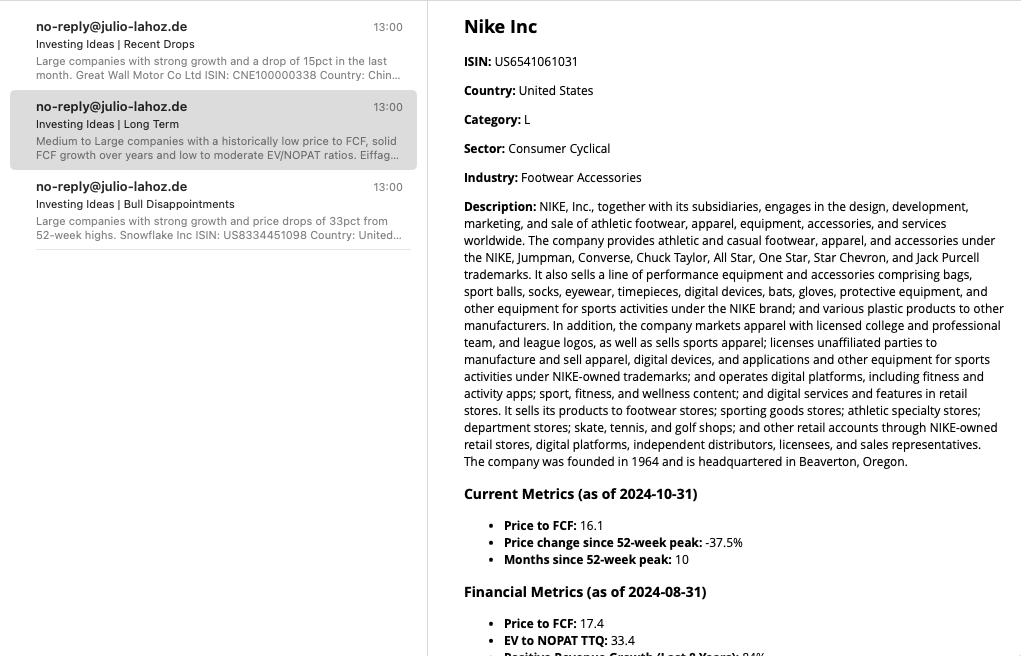

- Spotting Investment Opportunities: Each time the data is updated, a Lambda function screens companies based on criteria I set—such as low price-to-free-cash-flow ratios or strong growth with recent price drops. This produces a shortlist of potential opportunities, which is emailed to me (see Figure 2.).

- Dashboard Access: Finally, whenever I want to dig deeper, I can authenticate and pull the processed data into a local dashboard built with Streamlit. This allows me to review the email alerts in more detail and explore companies that show promise.
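To make the automated ETL step more concrete, here is a minimal sketch of what an S3-triggered Lambda handler could look like. It is an illustration under assumptions, not the production code: the statement field names (`revenue`, `operating_cash_flow`, `capital_expenditure`), the `raw/` and `staging/` key prefixes, and the helper names are all hypothetical. Only the shape of the S3 event notification and the `get_object` / `put_object` calls come from AWS itself.

```python
import json
from urllib.parse import unquote_plus


def iter_s3_objects(event):
    """Yield (bucket, key) for every record in an S3 event notification."""
    for record in event.get("Records", []):
        s3 = record["s3"]
        # Object keys arrive URL-encoded in S3 event notifications.
        yield s3["bucket"]["name"], unquote_plus(s3["object"]["key"])


def extract_kpis(statement):
    """Reduce a raw statement to a few KPIs (field names are hypothetical)."""
    revenue = statement["revenue"]
    fcf = statement["operating_cash_flow"] - statement["capital_expenditure"]
    return {
        "isin": statement["isin"],
        "period": statement["period"],
        "revenue": revenue,
        "free_cash_flow": fcf,
        "fcf_margin": round(fcf / revenue, 4) if revenue else None,
    }


def staging_key(raw_key):
    """Map 'raw/<rest>' to 'staging/<rest>' (assumed prefix layout)."""
    _prefix, _sep, rest = raw_key.partition("/")
    return f"staging/{rest}"


def handler(event, context, s3_client=None):
    """Lambda entry point: read each new raw object, write its KPIs to staging."""
    if s3_client is None:
        import boto3  # imported lazily so the pure functions run without AWS
        s3_client = boto3.client("s3")
    written = []
    for bucket, key in iter_s3_objects(event):
        body = s3_client.get_object(Bucket=bucket, Key=key)["Body"].read()
        kpis = extract_kpis(json.loads(body))
        out_key = staging_key(key)
        s3_client.put_object(
            Bucket=bucket, Key=out_key, Body=json.dumps(kpis).encode("utf-8")
        )
        written.append(out_key)
    return {"written": written}
```

One design note: because this sketch writes back to the same bucket, it assumes the S3 trigger is filtered to the `raw/` prefix. Without such a filter, each write to `staging/` would invoke the function again.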

Technology Used

AWS:

The following AWS services were used: S3, Lambda, SQS (Simple Queue Service), SES (Simple Email Service), CloudWatch, and EventBridge.
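Of these services, SQS is the hand-off between the weekly check and the download step. The sketch below shows one way the ISIN messages could be queued; it is an assumption about the message format (a small JSON body with an `isin` field), and the function names are hypothetical. What is not an assumption is the SQS limit of 10 messages per `send_message_batch` call, which matters when thousands of companies may need updating.

```python
import json


def build_sqs_batches(isins, batch_size=10):
    """Group ISINs into SendMessageBatch entry lists (SQS allows at most 10 per call)."""
    if not 1 <= batch_size <= 10:
        raise ValueError("SQS accepts between 1 and 10 messages per batch")
    batches = []
    for start in range(0, len(isins), batch_size):
        chunk = isins[start:start + batch_size]
        batches.append([
            # Ids only need to be unique within a batch; a running index is enough.
            {"Id": str(start + offset), "MessageBody": json.dumps({"isin": isin})}
            for offset, isin in enumerate(chunk)
        ])
    return batches


def enqueue(isins, queue_url, sqs_client):
    """Send all ISINs to the queue and return the ones SQS reported as failed."""
    failed = []
    for entries in build_sqs_batches(isins):
        response = sqs_client.send_message_batch(QueueUrl=queue_url, Entries=entries)
        failed_ids = {item["Id"] for item in response.get("Failed", [])}
        failed.extend(
            json.loads(entry["MessageBody"])["isin"]
            for entry in entries
            if entry["Id"] in failed_ids
        )
    return failed
```

In a Lambda function, `sqs_client` would be `boto3.client("sqs")`; passing it in as a parameter keeps the batching logic testable without AWS credentials.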

Python:

Python was used to call external APIs and to write the ETL pipelines and Lambda functions.
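As an illustration of the kind of Python logic that can live inside such a Lambda function, here is a minimal sketch of the screening step described earlier (low price-to-free-cash-flow, or strong growth with a recent price drop). The thresholds, the three-month price window, and all field names are assumptions chosen for the example, not the criteria actually used.

```python
def price_to_fcf(market_cap, free_cash_flow):
    """Price-to-free-cash-flow ratio; None when FCF is missing or not positive."""
    if free_cash_flow is None or free_cash_flow <= 0:
        return None
    return market_cap / free_cash_flow


def screen(companies, max_p_fcf=10.0, min_growth=0.15, max_price_change=-0.20):
    """Return companies that meet at least one criterion, with the reasons why.

    All thresholds are illustrative defaults:
    - max_p_fcf: highest ratio still counted as "low"
    - min_growth: minimum revenue growth (0.15 = 15%)
    - max_price_change: price move counted as a "drop" (-0.20 = down 20%)
    """
    shortlist = []
    for company in companies:
        ratio = price_to_fcf(company["market_cap"], company["free_cash_flow"])
        reasons = []
        if ratio is not None and ratio <= max_p_fcf:
            reasons.append("low P/FCF")
        if (company["revenue_growth"] >= min_growth
                and company["price_change_3m"] <= max_price_change):
            reasons.append("growth with price drop")
        if reasons:
            shortlist.append(
                {"isin": company["isin"], "p_fcf": ratio, "reasons": reasons}
            )
    return shortlist
```

Keeping the reasons alongside each hit makes the resulting email easier to scan, since it shows which rule put a company on the shortlist.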

Terraform:

Terraform was essential for this project, as it allowed me to define and deploy my infrastructure as code. This approach made it easy to modify and scale the system while minimizing the risk of forgotten infrastructure causing unexpected costs.

Streamlit:

Streamlit was used to create the dashboard for in-depth analysis, providing an interactive and user-friendly interface for exploring financial data (see Figure 3).
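For readers who have not used Streamlit, the sketch below shows how little code a basic interactive view needs. It is a hypothetical example, not the actual dashboard: the row fields (`isin`, `p_fcf`), the slider range, and the function names are assumptions. The Streamlit module is passed in as the `st` argument so that the filtering logic can be tried without Streamlit installed.

```python
def filter_rows(rows, max_p_fcf):
    """Keep rows with a known P/FCF at or below the limit, cheapest first."""
    kept = [r for r in rows if r["p_fcf"] is not None and r["p_fcf"] <= max_p_fcf]
    return sorted(kept, key=lambda r: r["p_fcf"])


def render_dashboard(st, rows):
    """Draw a simple screener page; `st` is the imported streamlit module."""
    st.title("Company screener")
    # The slider re-runs the script on every change, so the table stays in sync.
    limit = st.slider(
        "Max price-to-free-cash-flow", min_value=1.0, max_value=50.0, value=15.0
    )
    visible = filter_rows(rows, limit)
    st.metric("Companies shown", len(visible))
    st.dataframe(visible)
    return visible
```

In a script started with `streamlit run`, this would be used by adding `import streamlit as st` at the top and calling `render_dashboard(st, rows)` with the processed data loaded from storage.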

AI (Claude 3.5 Sonnet and GPT-4o models):

AI became my "junior developer" throughout this project. It helped with repetitive tasks and boilerplate code, giving me more time to focus on architecture and review. This was especially helpful when working with Terraform, which I had not used in a couple of years.

Costs and Return on Investment

Building this product took around 70% of my time over three weeks. At €100 per hour, that puts the human cost at roughly €8,400. Cloud costs remain negligible, thanks to AWS's free tier and an efficient architecture, with only a few cents spent each month.
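The human-cost estimate above can be reproduced with a few lines of arithmetic. The 40-hour working week is an assumption (the text only gives the 70% share, the three weeks, and the hourly rate), but it is the value that makes the numbers line up with the €8,400 figure.

```python
def development_cost(weeks, hours_per_week, time_share, hourly_rate):
    """Return (hours worked, cost) for a part-time effort over several weeks."""
    hours = weeks * hours_per_week * time_share
    return hours, hours * hourly_rate


hours, cost = development_cost(
    weeks=3,
    hours_per_week=40,   # assumption: a standard full-time week
    time_share=0.70,     # 70% of working time, as stated above
    hourly_rate=100,     # EUR per hour, as stated above
)
print(f"{hours:.0f} hours, about EUR {cost:,.0f}")  # prints: 84 hours, about EUR 8,400
```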

Of course, there was an opportunity cost: I could have worked on other projects during this time. While financial returns are yet to be seen, I believe this project has the highest potential ROI, for two reasons:

- Professional Portfolio: This project demonstrates my ability to design cost-effective, scalable systems quickly. Because I managed a 25-person team in my previous role, clients may assume I am disconnected from the technology. While my specialty remains Data Strategy and Project Management, this project shows that my technical skills are still sharp, so this prototype may open doors to new freelance projects.

- Investment Insights: This tool gives me an edge in spotting high-potential investments that could eventually cover the initial development costs many times over.

In conclusion, creating this data product has been a rewarding journey. By leveraging AWS and AI, I have built a scalable, efficient solution that lets me analyze companies more effectively than ever.

Prototype information

- Industry: Finance

- Category: Cloud Development, ETL

- Prototype date: Nov 2024

This prototype centers on building an automated data product for financial analysis of over 14,000 public companies. Utilizing a serverless, event-driven architecture, the system performs weekly checks for new financial statements, downloads relevant data, and processes it through an ETL pipeline. Data is enriched with current pricing information, consolidated for analysis, and screened for investment opportunities. Shortlisted companies are automatically emailed to me, and a Streamlit dashboard offers an interactive review of potential investments.

If you have any questions about the implementation, feel free to get in touch.

Disclaimer: The information included in this report is for informational purposes only and does not constitute investment advice.